NaaP Analytics: Project Completion Report

By the Cloud SPE Team — Livepeer.Cloud

Executive Summary

The Cloud SPE has completed delivery of the Network-as-a-Product (NaaP) MVP — SLA Metrics, Analytics, and Public Infrastructure project, funded by the Livepeer Treasury. This post provides a detailed accounting of what was proposed, what was built, how the architecture works, and what impact this has on the Livepeer network going forward.

Work began in late 2025 with the original pre-proposal and discovery process. The revised pre-proposal was submitted in January 2026 and passed the treasury vote. Execution then proceeded through three milestones, concluding in April 2026.

What Was Promised vs. What Was Delivered

Milestone 1 — Metrics Collection & Aggregation (February 2026) ✅

Promised:

- Define and implement the minimal metrics set

- Aggregate existing telemetry into a unified analytics layer

- A basic dashboard showing sample data flowing end to end

Delivered:

- A comprehensive metrics catalog covering network state, stream activity, performance, payments, reliability, and orchestrator leaderboard scoring

- A Kafka-to-ClickHouse ingest pipeline built on ClickHouse's Kafka Engine tables for durable event consumption. Delivery is at-least-once, and duplicates are removed downstream by the resolver.

- Materialized views that apply validation rules to incoming events and route each one to an accepted or ignored raw-event table

- Normalized tables capturing event-family facts and rollups

- A working Grafana dashboard demonstrating end-to-end data flow from Kafka through to visual output

- Documented bootstrap schema for reproducible fresh deployments

Milestone 2 — Test Signals & Derived Analytics (March 2026) ✅

Promised:

- Deploy reference load-test gateways

- Launch a public dashboard with core views

- APIs for ecosystem consumption

Delivered:

- Reference load-test gateway operational, generating consistent AI pipeline performance signals

- Four production Grafana dashboards:
  - System health overview
  - Real-time stream activity
  - Payments and revenue metrics
  - FPS, latency, and WebRTC performance

- Additional supply inventory dashboard tracking GPU capacity across the network

- A REST API with endpoints covering many requirement specs:
  - Network state and orchestrator profiles
  - Stream activity and job performance
  - Payment and economics data
  - Reliability and SLA scoring
  - Orchestrator leaderboard with composite scoring
  - GPU supply inventory and capacity

- OpenAPI specification embedded in the API service with Swagger UI

- Prometheus metrics endpoint for operational monitoring of the API itself

Milestone 3 — Stabilization & Review (April 2026) ✅

Promised:

- Harden infrastructure for reliability and cost efficiency

- Document metrics, assumptions, and known gaps

- Review outcomes with the community to determine next steps

Delivered:

- Full production deployment across multiple infrastructure nodes with separated concerns:
  - Kafka broker, MirrorMaker 2 for replicating events from Confluent Cloud, and a full ClickHouse + API + Grafana stack

- Traefik reverse proxy with automated TLS via Cloudflare

- Prometheus monitoring with 180-day retention policy

- Resolver service — a custom service that publishes corrected current and serving state into canonical stores

- dbt semantic layer publishing canonical and API views over normalized tables, with automated tests

- 31 data-quality validation tests in a scenario-based test harness

- Comprehensive documentation suite (see below)

Architecture Deep Dive

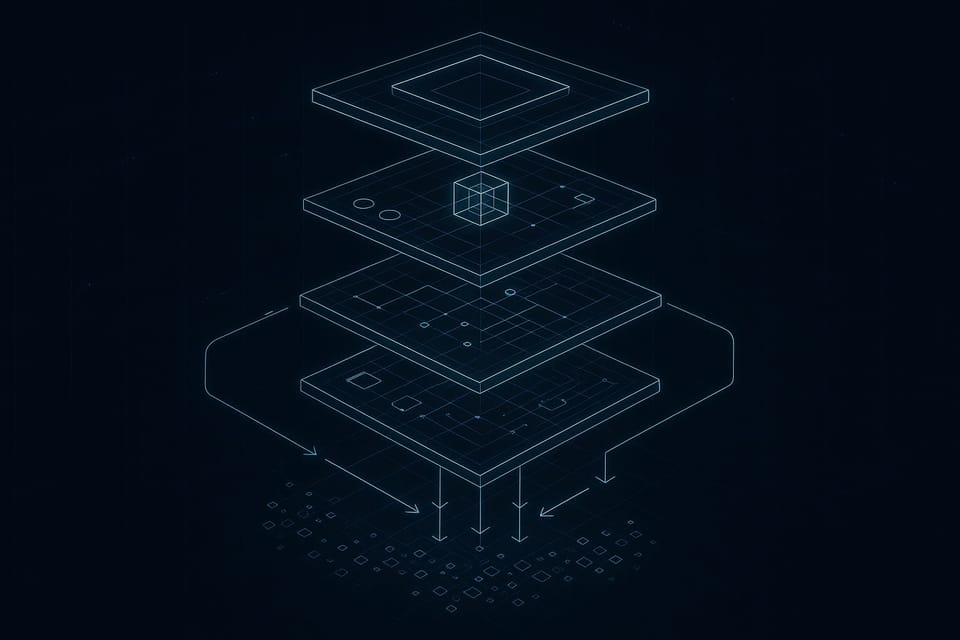

The NaaP Analytics platform follows a layered architecture designed for clarity, auditability, and extensibility:

Kafka Topics

↓

ClickHouse Kafka Engine Tables

↓

Ingest Materialized Views → accepted_raw_events / ignored_raw_events

↓

Normalized Tables (event-family facts + rollups)

↓

Resolver Service → canonical_*_store / api_*_store

↓

dbt Semantic Layer → canonical_* / api_* views

↓

Go REST API + Grafana Dashboards

Key Architectural Decisions

ClickHouse + Kafka Engine — We chose ClickHouse as the analytics store for its columnar storage efficiency and native Kafka engine support. This eliminates the need for a separate ETL service — ClickHouse consumes directly from Kafka topics, and materialized views handle routing and validation in a single step.

REST/JSON API Design — The API follows a straightforward REST design with no authentication required for public read endpoints. This maximizes accessibility for ecosystem teams while keeping the door open for org-scoped access in the future.

Tiered Serving Contract — Data flows through defined tiers: raw → normalized → canonical (resolver) → semantic (dbt) → API. Each tier has explicit contracts about freshness, correctness, and derivation rules. The resolver publishes "corrected" state — reconciling event ordering, handling late-arriving data, and producing a consistent current-state view.

The Resolver

The resolver deserves specific mention. It's a separate service that:

- Reads from normalized tables

- Computes corrected current state (handling out-of-order events, deduplication, state transitions)

- Publishes to canonical store tables

- Supports three run modes:
  - `bootstrap` (full historical rebuild)
  - `tail` (real-time processing)
  - `auto` (bootstrap, then tail)

- Includes repair capabilities for specific time windows

This is the component that transforms raw event noise into trustworthy, queryable state. Without it, dashboards would show inconsistencies from event ordering, network partitions, or delayed telemetry.

Enrichment Layer

The API includes a polling worker that enriches raw orchestrator data with:

- ENS name resolution

- Staking information from the Livepeer protocol

- Gateway metadata

- GPU inventory details

This ensures the API and dashboards present human-readable, contextually rich data rather than raw addresses and IDs.

Documentation Delivered

One of the project goals was to leave the community with not just working software, but a documented system that others can operate, extend, and contribute to:

| Document | Purpose |
|---|---|
| DESIGN.md | Architecture overview, layer rules, tier contracts, key decisions |
| PRODUCT_SENSE.md | Product goals, success criteria, non-goals |
| PLANS.md | Phase planning and implementation status |
| metrics-and-sla-reference.md | Community-facing metrics reference: formulas, SLA targets, glossary |
| architecture.md | Layer rules and enforcement model |
| system-visuals.md | Mermaid diagrams: ingest flow, resolver, deployment topology |
| data-validation-rules.md | Behavioral contract for all 17 validation rules (31 tests) |
| operations-runbook.md | Deployment, alerting, troubleshooting, maintenance, backups |
| devops-environment-guide.md | Monitoring, local/production environment setup |
| data-retention-policy.md | Kafka and ClickHouse retention windows, replay strategy |
| infra-hardening-runbook.md | Security posture, Kafka listener architecture |
| incident-response.md | Severity definitions (P0–P3), escalation contacts, post-mortem template |
| run-modes-and-recovery.md | Resolver run modes, failure recovery, rebuild procedures |
| compose-services.md | Docker Compose services, profiles, and responsibilities |

Every product spec is individually documented with requirement traceability.

Impact on the Livepeer Network

Immediate Impact

- Visibility: For the first time, anyone can see how the Livepeer AI network is performing. The picture comes not from marketing materials but from live data sourced from real workloads and standardized test signals.

- Comparability: Orchestrators can be compared on a level playing field: same metrics, same methodology, same data pipeline. The leaderboard scoring model uses composite metrics that reward both performance and reliability.

- Ecosystem Integration: The public APIs and data model are designed for consumption. The NaaP platform itself (livepeer/naap) is built so that any team can independently add new views, tools, and integrations.

- Operational Maturity: The Livepeer network now has documented SLA metrics, a metrics glossary, formulas for reliability and performance scoring, and a reference implementation of how to collect, validate, and serve this data. This is the kind of infrastructure that enterprise evaluators look for.

Foundation for Future Work

This project intentionally did not attempt to:

- Enforce SLAs or modify protocol incentives

- Introduce new routing logic

- Make protocol changes

These are all logical next steps, and they all depend on the measurement layer we've now established. Specifically, this enables:

- SLA-aware job routing — gateways can use performance data to route jobs to orchestrators that meet specific reliability or latency thresholds

- Network quality scores — aggregate metrics that can be published to the Livepeer Explorer or consumed by third-party evaluation tools

- Treasury accountability — future funded projects can point to observable metrics as evidence of impact

- GPU market intelligence — the supply inventory data provides a real-time view of network capacity, useful for both gateway operators planning workloads and orchestrators positioning their hardware
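SLA-aware routing is future work, so nothing below exists in the project today. As a sketch of how a gateway might consume the published metrics, this Go function keeps only orchestrators that meet assumed success-rate and p95-latency thresholds and then ranks them. The Candidate fields and thresholds are hypothetical.

```go
package main

import "sort"

// Candidate is a hypothetical view of one orchestrator's measured SLA data.
type Candidate struct {
	Address      string
	SuccessRate  float64 // fraction of jobs completed, 0..1
	P95LatencyMs float64
}

// Thresholds are assumed gateway-side requirements.
type Thresholds struct {
	MinSuccessRate  float64
	MaxP95LatencyMs float64
}

// Eligible keeps candidates that meet both thresholds and orders them by
// success rate (descending), then latency (ascending), then address.
func Eligible(cands []Candidate, t Thresholds) []Candidate {
	var out []Candidate
	for _, c := range cands {
		if c.SuccessRate >= t.MinSuccessRate && c.P95LatencyMs <= t.MaxP95LatencyMs {
			out = append(out, c)
		}
	}
	sort.Slice(out, func(i, j int) bool {
		if out[i].SuccessRate != out[j].SuccessRate {
			return out[i].SuccessRate > out[j].SuccessRate
		}
		if out[i].P95LatencyMs != out[j].P95LatencyMs {
			return out[i].P95LatencyMs < out[j].P95LatencyMs
		}
		return out[i].Address < out[j].Address
	})
	return out
}
```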

Lessons & Insights from the Build

Community Feedback Made This Better

The original pre-proposal (October 2025) was more ambitious — it included decentralized data transport via Streamr.Network, a larger budget, and broader scope. The community's feedback was pointed and constructive: too much scope, too much cost, unnecessary architectural risk. That feedback led to a complete reset. The revised proposal dropped Streamr, cut the budget, simplified the architecture, and focused on a thin but complete MVP. The result is better software shipped faster.

Existing Infrastructure Is an Asset

A key design principle was reusing existing Livepeer infrastructure wherever possible. Telemetry data from gateways and orchestrators already existed — it just wasn't aggregated or publicly accessible. By building on top of what was already there (gateway events, orchestrator telemetry, Kafka streams from Confluent Cloud), we avoided building a new event pipeline from scratch.

The Resolver Pattern Pays Off

Early in development, we faced the classic analytics challenge: raw events are noisy, out of order, and inconsistent. Rather than trying to fix this at the ingest layer (which would add latency and complexity), we built a separate resolver service that computes correct state from normalized events. This separation of concerns kept the ingest pipeline fast and simple while giving the API layer clean, trustworthy data. The resolver's repair mode — the ability to reprocess a specific time window — has already proven invaluable during development and will continue to be useful operationally.

Documentation Is a Deliverable

We treated documentation as a first-class deliverable, not an afterthought. Every architectural decision is recorded in an ADR. Every operational procedure has a runbook. Every validation rule has a behavioral contract. This matters because this is community infrastructure — it needs to be operable by people who didn't build it.

Right-Sizing Is an Art

The leaner budget forced discipline. We couldn't build everything, so we built the right things. The metrics catalog is minimal but sufficient. The API covers well-defined requirement specs. The dashboards address four key operational domains. Every component earned its place. This is a pattern we'd recommend to any SPE: start with the smallest thing that proves value, then let the data make the case for further investment.

Open Source

All code is available at github.com/Cloud-SPE/livepeer-naap-analytics under an open source license. The repository includes:

- Complete Go API source and OpenAPI specs

- Resolver service source

- dbt warehouse models with tests

- ClickHouse schema and migrations

- Grafana dashboards (JSON)

- Prometheus configuration

- Docker Compose stacks for local development

- Production deployment configurations for Docker / Portainer

- Full documentation suite

- Data validation test harness

Acknowledgments

This project exists because of the Livepeer community. The feedback on the original pre-proposal — from DeFine, Karolak, vires-in-numeris, j0sh, Authority_Null, rickstaa, dob, and others — was direct, constructive, and ultimately made the project significantly better. Mehrdad from the Livepeer Foundation provided ongoing guidance and confirmed alignment with the network observability roadmap. Qiang Han from Livepeer Inc endorsed the proposal and committed to collaboration throughout execution. honestly_rich championed transparency and accountability throughout the process.

This is what community-driven governance looks like when it works.

The NaaP Analytics platform is live and open source. Review the code at github.com/Cloud-SPE/livepeer-naap-analytics, explore the proposal thread, and reach out in Livepeer Discord with questions or ideas for what to build next.